Causal Reasoning in TSFMs: From Forecasting to Decision Support

TSFMs tell you what will happen. Decision-makers need to know what would happen under different actions. Two new research directions — interventional priors and activation transplantation — are giving TSFMs their first taste of causal reasoning, opening a path from passive prediction to active decision support.

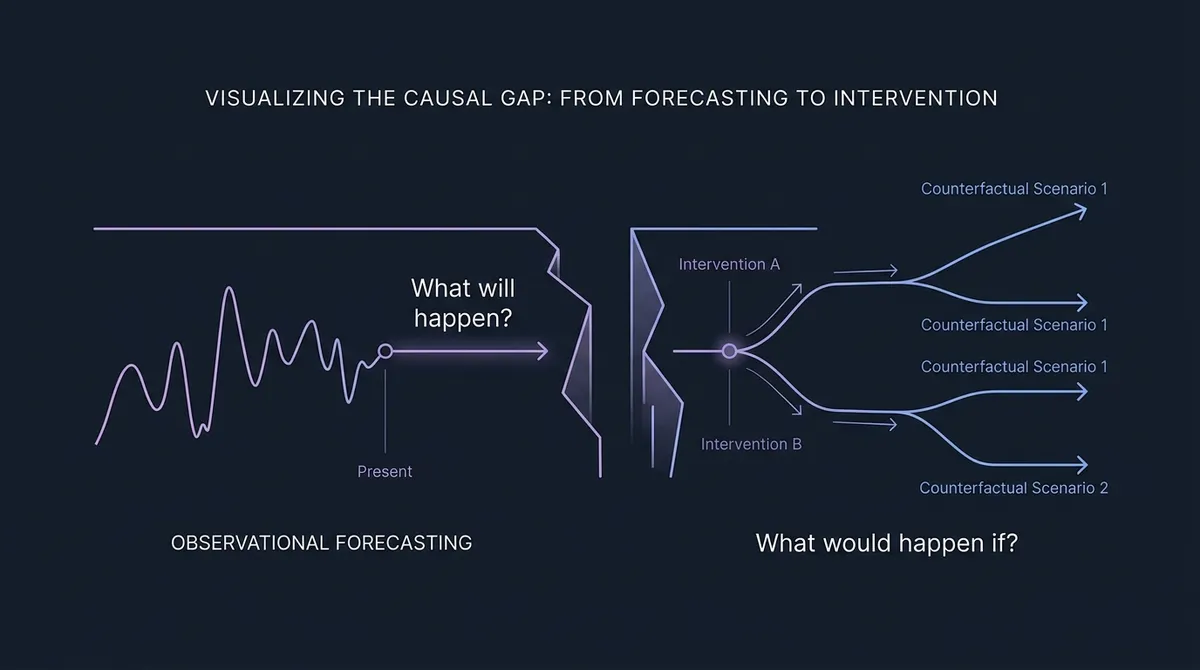

Every production forecasting system eventually runs into the same wall. The model tells you that demand will spike next Tuesday. It does not tell you whether running a promotion caused the spike, whether cancelling the promotion would prevent it, or whether doubling the promotion budget would double the effect. The forecast answers what will happen. The decision-maker needs to know what would happen if.

This gap — between prediction and intervention — is the central limitation of time series foundation models today. Most current TSFMs forecast from observational history: sequences of past values, without identifying the effects of interventions that may have generated them. Some newer systems accept covariates, but covariates as model inputs are not the same as reasoning about what would happen under a different intervention. TSFMs learn statistical patterns — trends, seasonality, cross-series correlations — and they generalize those patterns zero-shot to new domains with strong accuracy on many benchmarks. But they do not distinguish correlation from causation, and they cannot simulate what would happen under a different course of action.

Our companion post on TSFMs vs world models framed this as a gap in the current TSFM paradigm: most TSFM training objectives and datasets are purely observational, while world models represent causal structure and support action-conditioned prediction. But two lines of recent research are beginning to close that gap — not by replacing TSFMs with world models, but by adding causal reasoning capabilities within the same broad architecture family. This post is a technical look at both approaches, what they enable today, and where they point for practitioners.

# The Causal Gap in Current TSFMs

To understand why causal reasoning is hard for TSFMs, consider what they actually learn. A transformer trained on billions of time series data points learns a conditional distribution: given a context window of historical values, predict the distribution of future values. This is a purely observational quantity — it captures P(future | past), the probability of future outcomes given past observations.

Decision-making requires a different quantity: P(future | do(action)), the probability of future outcomes if we intervene and take a specific action. Judea Pearl's do-calculus formalized this distinction decades ago. Observing that umbrellas correlate with rain does not mean removing umbrellas will stop rain. Similarly, observing that a promotion correlates with a demand spike does not tell you whether the promotion caused the spike or whether both were driven by a seasonal pattern.
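The gap between the two quantities can be made concrete with a toy simulation. The sketch below is an illustration only: the structural model (a hidden season flag that drives both the promotion decision and demand), the effect sizes, and the function names are all assumptions invented for this example, not taken from any paper discussed here.

```python
import random

def simulate(n, seed=0, do_promo=None):
    """Toy structural model: season -> promo, season -> demand, promo -> demand."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        season = rng.random() < 0.5  # hidden common cause (peak season or not)
        if do_promo is None:
            # Observational regime: promotions mostly run in peak season.
            promo = rng.random() < (0.8 if season else 0.2)
        else:
            # do(promo): set by fiat, which severs the season -> promo arrow.
            promo = do_promo
        # True causal effect of the promotion is +5 units (made-up number).
        demand = 100 + 30 * season + 5 * promo + rng.gauss(0, 1)
        rows.append((promo, demand))
    return rows

def mean(values):
    values = list(values)
    return sum(values) / len(values)

obs = simulate(20000)
# Observational contrast: E[demand | promo=1] - E[demand | promo=0]
obs_gap = mean(d for p, d in obs if p) - mean(d for p, d in obs if not p)
# Interventional contrast: E[demand | do(promo=1)] - E[demand | do(promo=0)]
do_gap = (mean(d for _, d in simulate(20000, do_promo=True))
          - mean(d for _, d in simulate(20000, do_promo=False)))

print(f"observational gap:  {obs_gap:.1f}")
print(f"interventional gap: {do_gap:.1f}")
```

In this toy setup the observational gap comes out several times larger than the true effect of 5, because promoted periods are disproportionately peak-season periods. A model trained only on the observational rows has no way to tell the two apart.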

Current TSFMs estimate only the first quantity, and using their output as if it were the second conflates the two. They produce strong probabilistic forecasts of what will happen given the historical pattern, but they cannot answer interventional or counterfactual questions: what would happen, or would have happened, under a different action? This is not a failure of scale or training data. It is a structural limitation of the observational training objective.

Causal modeling of time series is not new — methods like CRN (Bica et al., 2020) and the Causal Transformer (Melnychuk et al., 2022) estimate individualized treatment effects over time. What is new is applying the foundation model paradigm to this problem: pretraining once on diverse causal data, then performing causal inference in-context on unseen series. Two recent papers, one from late 2025 and one from March 2026, attack this from opposite directions.

# Approach 1: Interventional Priors — Teaching TSFMs Causal Structure from Scratch

The first approach asks: can we train a foundation model to perform causal inference on time series, not just forecasting? CausalTimePrior (Thumm et al., 2026; submitted to the ICLR 2026 TSALM Workshop) extends the Prior-data Fitted Network (PFN) paradigm to temporal causal inference by building a synthetic data generator that produces paired observational and interventional time series.

# The Core Idea

PFNs work by pretraining a transformer on synthetic data sampled from a prior distribution over data-generating processes. At inference time, they perform in-context learning: given a new dataset as context, the pretrained transformer directly outputs predictions without gradient updates. Previous PFN work — Do-PFN (Robertson et al., 2025) and CausalFM (Ma et al., 2025) — demonstrated this for tabular causal inference. CausalTimePrior extends it to temporal data.

The technical challenge is the data generator. Existing time series benchmarks (CausalTime, TimeGraph, CauseMe) provide observational data with ground-truth causal graphs, but none generate interventional data — the paired "what actually happened" and "what would have happened under intervention" trajectories needed to train a causal model. CausalTimePrior fills this gap with a prior over temporal structural causal models (TSCMs).

# How It Works

CausalTimePrior samples random TSCMs, each defined by:

- A time-lagged directed acyclic graph specifying which variables causally influence which others, and at what time lags.

- Nonlinear structural equations drawn from a diverse function family (linear, sinusoidal, polynomial, exponential) with random weights — ensuring the prior covers a wide range of real-world temporal dynamics.

- Multiple intervention types: hard interventions (setting a variable to a fixed value, severing its causal parents), soft interventions (perturbing the mechanism), and time-varying interventions (ramps, steps, sinusoidal profiles).

For each sampled TSCM, the framework generates an observational time series by forward simulation and an interventional time series by applying the specified intervention. The PFN is then trained to predict the interventional outcome given only the observational data, the intervention specification, and the target variable — performing in-context causal effect estimation.
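The generation loop described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the CausalTimePrior implementation: the function names (`sample_tscm`, `simulate`), the edge probability, the weight range, the clipping, and the tamed polynomial and exponential stand-ins are all hypothetical choices made to keep the toy simulation stable. Only hard interventions are shown.

```python
import math
import random

# Small function family; the "poly" and "exp" entries are tamed stand-ins.
FUNCS = {
    "linear": lambda x: x,
    "sin": math.sin,
    "poly": lambda x: 0.1 * x * x,
    "exp": lambda x: math.exp(-abs(x)),
}

def sample_tscm(n_vars, max_lag, edge_prob, rng):
    """Sample lagged edges: parents[j] = [(parent, lag, weight, func_name), ...].

    Every edge has lag >= 1, so the time-unrolled graph is acyclic by construction.
    """
    parents = {j: [] for j in range(n_vars)}
    for j in range(n_vars):
        for i in range(n_vars):
            for lag in range(1, max_lag + 1):
                if rng.random() < edge_prob:
                    weight = rng.uniform(-0.5, 0.5)
                    parents[j].append((i, lag, weight, rng.choice(sorted(FUNCS))))
    return parents

def simulate(parents, noise, intervention=None):
    """Forward-simulate the model; intervention = (var, start_t, value) is a hard do()."""
    n_steps, n_vars = len(noise), len(parents)
    x = [[0.0] * n_vars for _ in range(n_steps)]
    for t in range(n_steps):
        for j in range(n_vars):
            if intervention and j == intervention[0] and t >= intervention[1]:
                x[t][j] = intervention[2]  # causal parents are ignored
                continue
            total = noise[t][j]
            for i, lag, weight, name in parents[j]:
                if t - lag >= 0:
                    total += weight * FUNCS[name](x[t - lag][i])
            x[t][j] = max(-10.0, min(10.0, total))  # clip for numerical stability
    return x

rng = random.Random(0)
n_vars, n_steps = 3, 50
parents = sample_tscm(n_vars, max_lag=2, edge_prob=0.4, rng=rng)
# Reusing the same noise for both runs isolates the effect of the intervention.
noise = [[rng.gauss(0, 0.1) for _ in range(n_vars)] for _ in range(n_steps)]
observational = simulate(parents, noise)
interventional = simulate(parents, noise, intervention=(0, 25, 2.0))
```

The two trajectories agree up to the intervention time and diverge afterward only through the descendants of the intervened variable. A training example for the PFN would pair `observational` and the intervention specification as input with `interventional` as the target.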

# The Regime-Switching Extension

A particularly notable contribution is support for regime-switching TSCMs, where the causal structure itself changes over time according to a Markov process. In 15% of training examples, the underlying causal graph switches between 2–3 regimes — mirroring real-world scenarios where economic policy shifts, supply chain disruptions, or market structure breaks alter the causal relationships between variables. This is the first synthetic generator to combine regime-switching dynamics with interventional time series generation.
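As a rough illustration of the regime mechanism, the sketch below samples a regime path from a first-order Markov chain. The 15% switching rate and the 2–3 regime count come from the description above; the persistence probability, the series length, and the function name `sample_regimes` are assumptions made for this example.

```python
import random

def sample_regimes(n_steps, n_regimes, stay_prob, rng):
    """Sample a regime index per time step from a first-order Markov chain."""
    regimes = [rng.randrange(n_regimes)]
    for _ in range(n_steps - 1):
        current = regimes[-1]
        if rng.random() < stay_prob:
            regimes.append(current)
        else:
            # Jump uniformly to one of the other regimes.
            others = [r for r in range(n_regimes) if r != current]
            regimes.append(rng.choice(others))
    return regimes

rng = random.Random(1)
use_switching = rng.random() < 0.15  # share of regime-switching examples
n_regimes = rng.choice([2, 3])
regimes = sample_regimes(n_steps=200, n_regimes=n_regimes, stay_prob=0.97, rng=rng)

# A simulator would keep one causal graph per regime and, at each step t,
# evaluate the structural equations of graphs[regimes[t]].
switch_points = [t for t in range(1, len(regimes)) if regimes[t] != regimes[t - 1]]
print(f"{n_regimes} regimes, {len(switch_points)} switches")
```

A high persistence probability produces long stable stretches punctuated by occasional structural breaks, which is the pattern the policy-shift and supply-disruption examples suggest.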

# What It Means for Practitioners

CausalTimePrior is best read, today, as a synthetic-data framework plus a small proof-of-concept causal PFN. The current model is a 2-layer GRU with 128 hidden dimensions, trained in roughly 11 minutes on CPU — a long way from a transformer-scale TSFM. The authors explicitly note that scaling to transformer architectures is future work. Early results on held-out synthetic TSCMs are encouraging (the PFN recovers causal effects in many in-distribution settings), but out-of-distribution performance degrades and the classical baseline PCMCI+ beats the PFN on some metrics.

The significance is architectural, not empirical. CausalTimePrior demonstrates that the PFN recipe — pretraining on synthetic causal data, then performing in-context inference — can extend to temporal data. If a scaled version inherits the zero-shot capabilities of current TSFMs, it could eventually give practitioners a tool that takes in a multivariate time series and estimates "what would variable Y do if we intervened on variable X at time t?" — directly, without building a bespoke causal model. That is a meaningful research direction, but not yet a deployable capability.

# Approach 2: Activation Transplantation — Steering TSFM Hidden States for Scenario Analysis

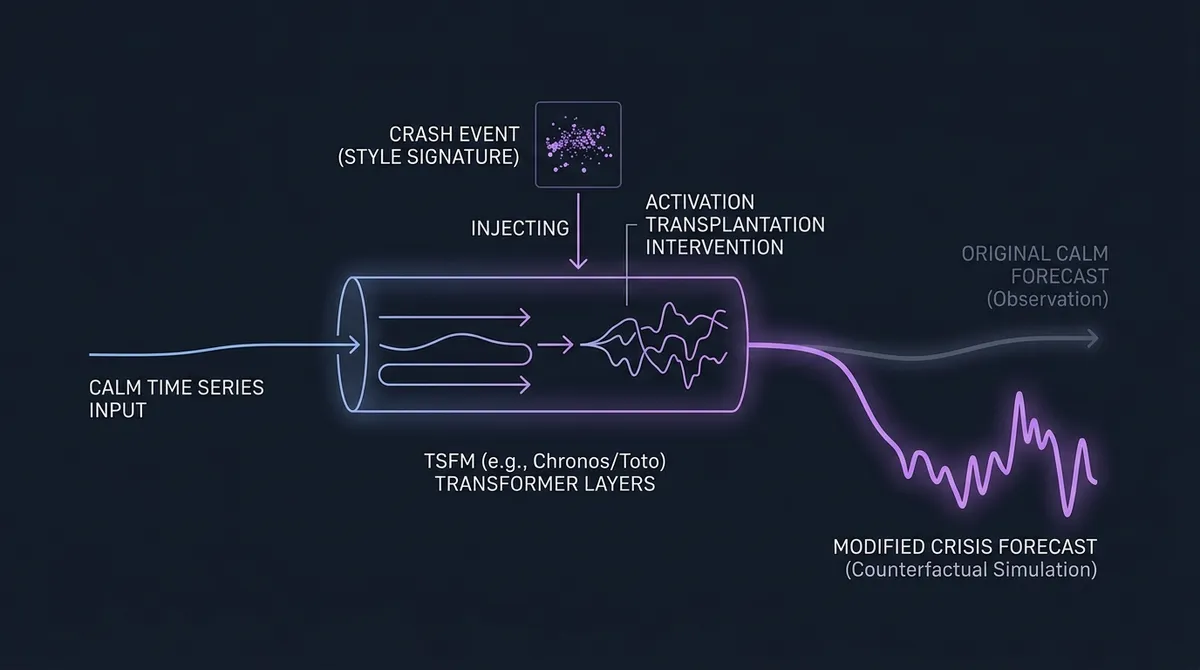

The second approach starts from the opposite end. Instead of training a new causal model, time2time (Sanyal et al., NeurIPS 2025 BERT2S Workshop) asks whether existing TSFMs already encode semantically meaningful regime structure in their hidden states, and whether those representations can be steered for stress-testing.

# The Core Discovery

time2time introduces activation transplantation, a technique borrowed from mechanistic interpretability in LLMs. The procedure works in three steps:

- Extract a semantic signature from a "style" event (e.g., a historical market crash) by computing the mean and standard deviation of hidden-layer activations across the sequence.

- Intervene on a target series (e.g., a calm period) by standardizing its activations and re-scaling them with the style event's statistics — effectively "transplanting" the crash's semantic fingerprint onto the calm period's internal representation.

- Resume the forward pass from the modified activations to generate an intervened forecast.
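The first two steps amount to a standardize-then-rescale operation on hidden activations. The sketch below shows that arithmetic on plain nested lists. It assumes statistics are computed per hidden channel across the sequence axis, which is one reading of the description above; the function names and the toy activations are hypothetical, and the real method would apply this inside a chosen layer of the model before resuming the forward pass.

```python
import statistics

def channel_stats(acts):
    """Per-channel mean and std over the sequence axis; acts is [seq_len][hidden_dim]."""
    columns = list(zip(*acts))
    means = [statistics.fmean(col) for col in columns]
    stds = [statistics.pstdev(col) for col in columns]
    return means, stds

def transplant(target, style, eps=1e-6):
    """Standardize target activations, then rescale them with the style statistics."""
    t_mean, t_std = channel_stats(target)
    s_mean, s_std = channel_stats(style)
    return [
        [(v - t_mean[k]) / (t_std[k] + eps) * s_std[k] + s_mean[k]
         for k, v in enumerate(row)]
        for row in target
    ]

# Toy activations with hidden_dim = 2 (invented numbers, not model outputs).
calm = [[0.1 * t, 1.0 + 0.05 * t] for t in range(8)]
crash = [[-3.0 + 0.5 * (t % 3), 4.0 - 1.0 * (t % 2)] for t in range(8)]

steered = transplant(calm, crash)
print(channel_stats(steered))  # close to channel_stats(crash)
```

The steered activations keep the calm series' temporal shape (each channel is an affine transform of the original) while taking on the crash period's first and second moments.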

The results are striking. Injecting crash statistics into a calm period's hidden states deterministically induces downturn predictions. Injecting calm statistics into a crash period suppresses the crash forecast and restores stability. This works bidirectionally and consistently across two architecturally distinct TSFMs: Toto (decoder-only) and Chronos (encoder-decoder, 8M–710M parameters).

# Beyond Binary Steering: Graded Severity

The most significant finding is that TSFMs encode event severity as a continuous, interpretable latent variable. The norm of the activation vector correlates directly with the magnitude of systemic shocks — the model distinguishes the Dot-com crash from the 2008 financial crisis from the 2020 COVID crash, encoding each at a different intensity in its latent space. This is not binary "crash vs. calm" — it is a graded, semantically meaningful representation of event dynamics.

Similarity analysis in a PCA-reduced subspace of latent activations suggests that different crash periods align more closely with each other than with calm periods, pointing to a coherent regime concept in the model's internal geometry. This concept solidifies in middle-to-late transformer layers and is preserved across model scales.
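For readers who want to see what these two measurements look like mechanically, here is a small sketch. The vectors are invented purely to show the computation and are not results from the paper; the helper names are hypothetical, and the PCA projection step is omitted for brevity.

```python
import math

def l2_norm(v):
    return math.sqrt(sum(x * x for x in v))

def cosine(u, v):
    return sum(a * b for a, b in zip(u, v)) / (l2_norm(u) * l2_norm(v))

# Hypothetical pooled activations for three windows (toy numbers only).
signatures = {
    "calm": [0.2, -0.1, 0.1],
    "crash_mild": [1.0, 0.8, -0.5],
    "crash_severe": [2.1, 1.5, -1.2],
}

# Severity proxy: the norm of each window's activation vector.
norms = {name: l2_norm(vec) for name, vec in signatures.items()}

# Regime coherence: compare crash-to-crash similarity with crash-to-calm.
crash_to_crash = cosine(signatures["crash_mild"], signatures["crash_severe"])
crash_to_calm = cosine(signatures["crash_mild"], signatures["calm"])

print(norms)
print(f"crash vs crash: {crash_to_crash:.2f}, crash vs calm: {crash_to_calm:.2f}")
```

On real activations, the claim above corresponds to the norm tracking event magnitude and the crash-to-crash similarity exceeding the crash-to-calm similarity.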

# What It Means for Practitioners

For financial time series and risk management, time2time demonstrates an intriguing research capability: semantic scenario steering. Instead of generating synthetic stress scenarios by hand or replaying historical crises, you can transplant the activation signature of a known crisis onto a current market state and observe how the TSFM's forecast changes. The current real-data experiments are narrow — daily NASDAQ-100 closing values over selected calm and crash windows — so this is a research prototype, not a production tool. But it opens a path toward model-native stress testing that preserves learned dynamics while steering the high-level regime.

More broadly, time2time provides evidence that TSFMs develop structured internal representations of temporal concepts, not just surface-level curve fits. This connects to the finding by Rohekar et al. (ICML 2025) that autoregressive transformers implicitly learn causal world models underlying their next-token predictions — though that work was demonstrated in the controlled Othello game environment, not on continuous time series.

# Two Paths, One Direction

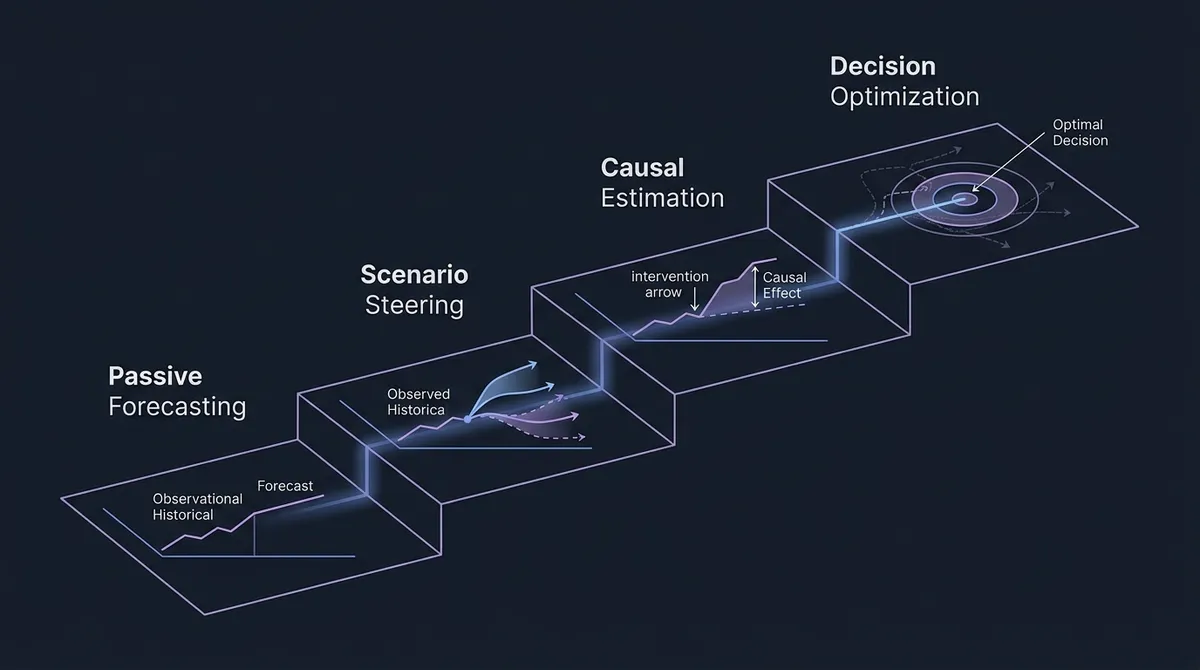

These two approaches are complementary. CausalTimePrior works at the training level: it builds models that explicitly learn causal structure from interventional data, targeting zero-shot causal effect estimation on new time series. time2time works at the inference level: it exploits the regime structure that existing TSFMs have already learned, enabling semantic scenario steering without retraining.

Together, they trace a path from passive forecasting to active decision support:

| Stage | Capability | Example |
|---|---|---|
| 1. Passive forecasting (current TSFMs) | P(future \| past) | "Demand will spike next Tuesday" |
| 2. Scenario steering (time2time) | "What if this regime?" | "If a 2008-scale shock hit now, demand would drop 40%" |
| 3. Causal effect estimation (CausalTimePrior) | P(future \| do(action)) | "Running the promotion causes a 12% demand increase" |
| 4. Decision optimization (future) | Maximize expected utility under costs and constraints | "Run the promotion at 75% budget for optimal margin" |

Current TSFMs sit at Stage 1. time2time enables Stage 2 for existing models. CausalTimePrior's early results suggest a route toward Stage 3 on synthetic benchmarks, though real-world validation remains ahead. Stage 4 — where causal TSFMs become the quantitative backbone of optimization and planning systems — is the long-term research horizon.
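To make the last stage less abstract, the sketch below shows the shape of a decision-optimization step once an interventional forecast is available. Everything here is hypothetical: `sample_demand` is a made-up stand-in for draws from P(demand | do(budget)), and the lift curve, margin, and cost figures are invented for illustration.

```python
import random

def sample_demand(budget_frac, rng):
    """Hypothetical stand-in for one draw from P(demand | do(budget))."""
    lift = 0.12 * (1 - (1 - budget_frac) ** 2)  # diminishing returns (made up)
    return 1000 * (1 + lift) * rng.lognormvariate(0, 0.1)

def expected_margin(budget_frac, n_draws=5000, seed=0,
                    unit_margin=4.0, full_cost=500.0):
    """Monte Carlo estimate of expected margin for one candidate action."""
    rng = random.Random(seed)  # same seed per candidate: common random numbers
    draws = [sample_demand(budget_frac, rng) for _ in range(n_draws)]
    return unit_margin * sum(draws) / n_draws - full_cost * budget_frac

candidates = [0.0, 0.25, 0.5, 0.75, 1.0]
best = max(candidates, key=expected_margin)
for b in candidates:
    print(f"budget {b:.2f}: expected margin {expected_margin(b):.0f}")
print("best budget fraction:", best)
```

The optimizer itself is trivial. The hard part, and the reason this stage is still a research horizon, is obtaining a trustworthy interventional distribution to plug in where `sample_demand` sits.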

# Why TSFMs Are the Right Foundation

One might ask: if you need causal reasoning, why not switch to world models entirely? Our world models comparison explains the practical answer in detail, but the causal reasoning angle adds a structural argument.

TSFMs have three properties that make them well suited as the numerical backbone for decision systems:

Uncertainty estimation. Conformal prediction and quantile regression give TSFMs a path to uncertainty estimates over observable values — and while calibration quality varies across models and datasets, the best current TSFMs produce tighter and better-calibrated intervals than many baselines. Decision-making under uncertainty requires knowing not just the expected outcome but the range of plausible outcomes. World models predict in latent space, making calibrated uncertainty over observables significantly harder.
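As one concrete instance, split conformal prediction wraps any point forecaster with an interval calibrated on held-out residuals. The sketch below is a minimal version with invented calibration data and a hypothetical function name. Note the assumption it relies on: residuals are treated as exchangeable, which time series often violate, so the nominal coverage should be read as approximate.

```python
import math

def conformal_halfwidth(actuals, forecasts, alpha=0.1):
    """Interval half-width from absolute residuals on a held-out calibration set."""
    scores = sorted(abs(a - f) for a, f in zip(actuals, forecasts))
    n = len(scores)
    # Finite-sample corrected rank of the (1 - alpha) quantile.
    rank = math.ceil((n + 1) * (1 - alpha))
    # Too few calibration points for this alpha: the interval is unbounded.
    return scores[rank - 1] if rank <= n else math.inf

# Toy calibration set: 19 past forecasts of 100 with residuals of size 1..19.
calib_forecasts = [100.0] * 19
calib_actuals = [100.0 + (i if i % 2 else -i) for i in range(1, 20)]

halfwidth = conformal_halfwidth(calib_actuals, calib_forecasts, alpha=0.1)
new_point_forecast = 120.0
interval = (new_point_forecast - halfwidth, new_point_forecast + halfwidth)
print("90% interval:", interval)
```

Because the procedure only needs forecasts and realized values in observable space, it applies directly to a TSFM's outputs, which is the contrast with latent-space predictors drawn above.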

Zero-shot generalization. A causal TSFM that inherits the zero-shot capabilities of current models could perform causal inference on new domains without domain-specific causal graphs. CausalTimePrior's in-context learning approach targets exactly this property: the model sees a new multivariate series and attempts to estimate causal effects without prior knowledge of the domain's causal structure. Whether this works beyond synthetic benchmarks is an open question, but the architectural compatibility is clear.

Production infrastructure. TSFMs already have mature serving pipelines, GPU optimization, and benchmark ecosystems. Adding causal capabilities to this existing infrastructure is an incremental extension, not a rebuild. Deploying a world model for causal time series reasoning would require building an entirely new stack.

The key insight is that causal reasoning does not require abandoning the TSFM paradigm. It requires extending the training data (interventional priors), the inference procedure (activation transplantation), or both. The underlying architecture family — sequential models processing numerical data — is preserved.

# Open Challenges

Neither approach is production-ready, and several hard problems remain.

Identifiability. CausalTimePrior trains on synthetic TSCMs where the true causal graph is known. Real-world time series have unknown causal structure, confounders, and measurement error. Whether in-context causal inference can handle these complications at scale is an open empirical question.

Scalability of activation transplantation. time2time demonstrates semantic steering along a single coarse regime axis (crash vs. calm, with graded severity). Extending this to fine-grained causal interventions, such as "what if we raised prices 5% in region A but not region B?", requires a more granular understanding of how specific causal factors are encoded in hidden states.

Bridging observational and interventional distributions. CausalTimePrior's PFN approach assumes the model can learn the mapping from observational to interventional distributions. For complex real-world systems with high-dimensional confounding, this mapping may require significantly more capacity and training diversity than current experiments suggest.

Integration with existing TSFMs. Both approaches currently operate in isolation. CausalTimePrior trains a new model; time2time post-hoc manipulates an existing one. A unified architecture that combines the zero-shot forecasting performance of current TSFMs with native causal reasoning capabilities remains to be built.

# What Practitioners Should Do Now

For most forecasting workloads, the immediate action is: nothing different. TSFMs remain strong tools for production forecasting in many settings, and causal capabilities are not yet mature enough for deployment.

But three near-term opportunities are worth monitoring:

- Exploring scenario steering with activation transplantation. If you use Toto or Chronos for financial or risk-sensitive forecasting, time2time's open-source implementation lets you experiment with semantic scenario steering. Transplant crisis signatures onto current data and examine how forecasts shift. This is a research tool, not a production system, but it is a useful diagnostic for understanding what your model has learned about regime dynamics.

- Evaluating causal assumptions. When a forecast drives a decision, ask whether you are implicitly treating the forecast as causal. If "demand will rise 10%" is being used to justify a supply chain decision, you are assuming the rise will happen regardless of your action. Being explicit about this assumption, and planning for when causal TSFMs can test it, is good practice now.

- Tracking the CausalTimePrior line of work. The PFN approach to causal time series inference is architecturally compatible with zero-shot deployment. If it scales beyond synthetic benchmarks to real-world datasets, it could become a drop-in tool for causal effect estimation. The CausalTimePrior repository is the one to watch.

# The Bigger Picture

TSFMs were built to predict. The research covered here is the beginning of something more ambitious: TSFMs that can reason about interventions, steer scenarios, and ultimately contribute to decision-making systems. The path from passive prediction to decision support is long, and the evidence so far is preliminary. But for the first time, we can see concrete technical approaches that connect forecasting to causal reasoning within the same model family. Foundation models are already useful for prediction. The open question is whether they can learn to answer the harder one: what should we do?

Explore the current generation of TSFMs in our model catalog and 2026 toolkit guide.