The 2026 TSFM Toolkit: Which Foundation Model for Which Job?

With 18+ time series foundation models now available, choosing the right one for your workload is the real challenge. Here's a practitioner's decision framework.

Two years into the time series foundation model era, the paradox of choice has arrived. In 2024, your decision was simple: Chronos or maybe Lag-Llama. By early 2026, there are over a dozen production-ready models spanning autoregressive decoders, efficient encoders, mixture-of-experts architectures, diffusion models, and ultra-compact MLP mixers. Each makes different tradeoffs between accuracy, latency, parameter count, and supported features.

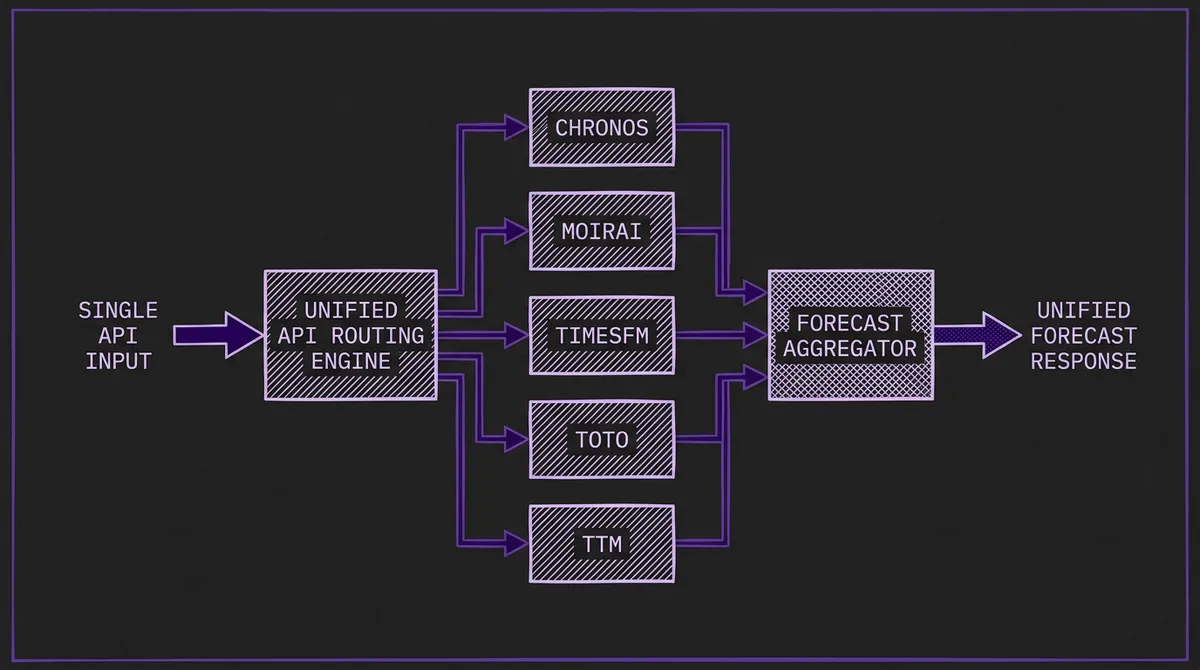

This guide cuts through the noise. We profile the models that matter most for production workloads in 2026, organized by the job you need done. Every model listed here is available through the TSFM.ai unified API, so you can test them head-to-head without managing infrastructure.

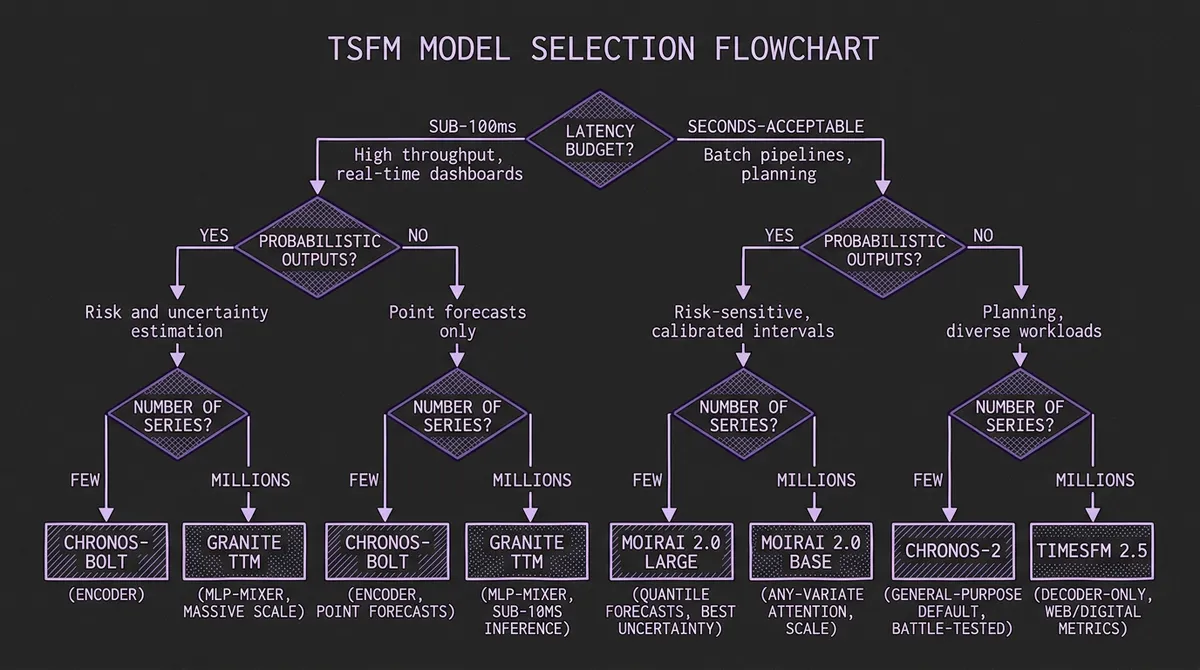

# The Decision Framework

Before picking a model, answer three questions:

- What is your latency budget? Sub-100ms (real-time dashboards, streaming) vs. seconds-acceptable (batch pipelines, planning).

- Do you need probabilistic outputs? Point forecasts for planning, or full prediction intervals for risk and uncertainty quantification?

- How many series are you forecasting? A handful of high-value signals vs. millions of SKUs or sensors.

With those answers, here is where each model family fits.

# Best General-Purpose: Chronos-2 and Chronos-Bolt

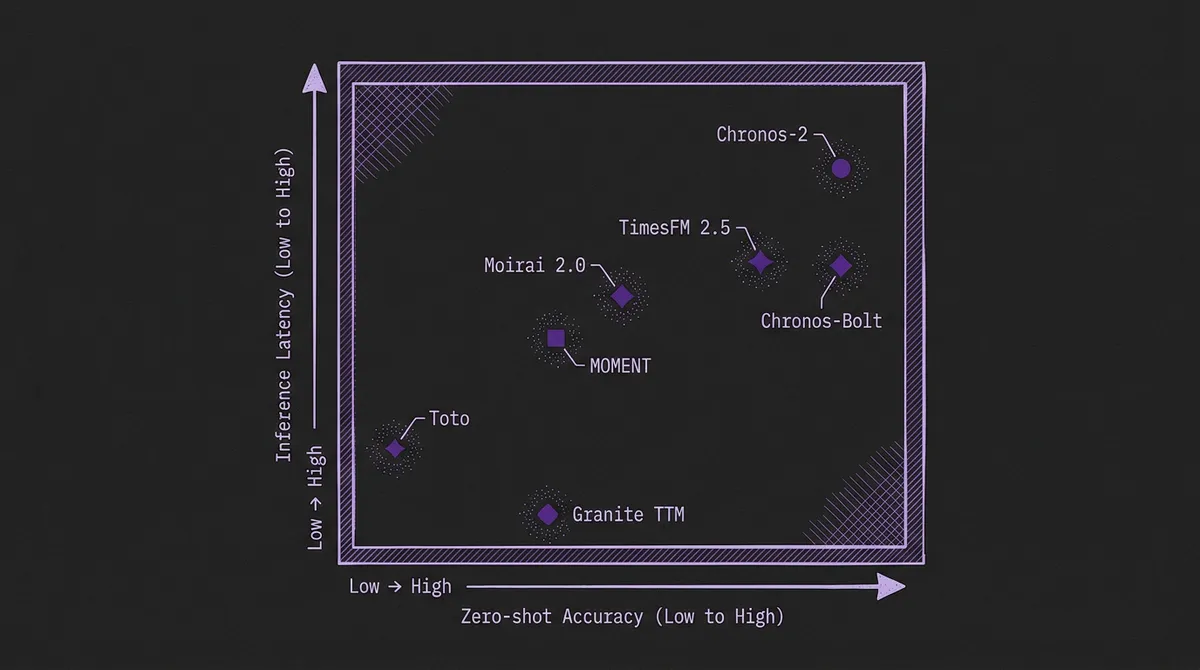

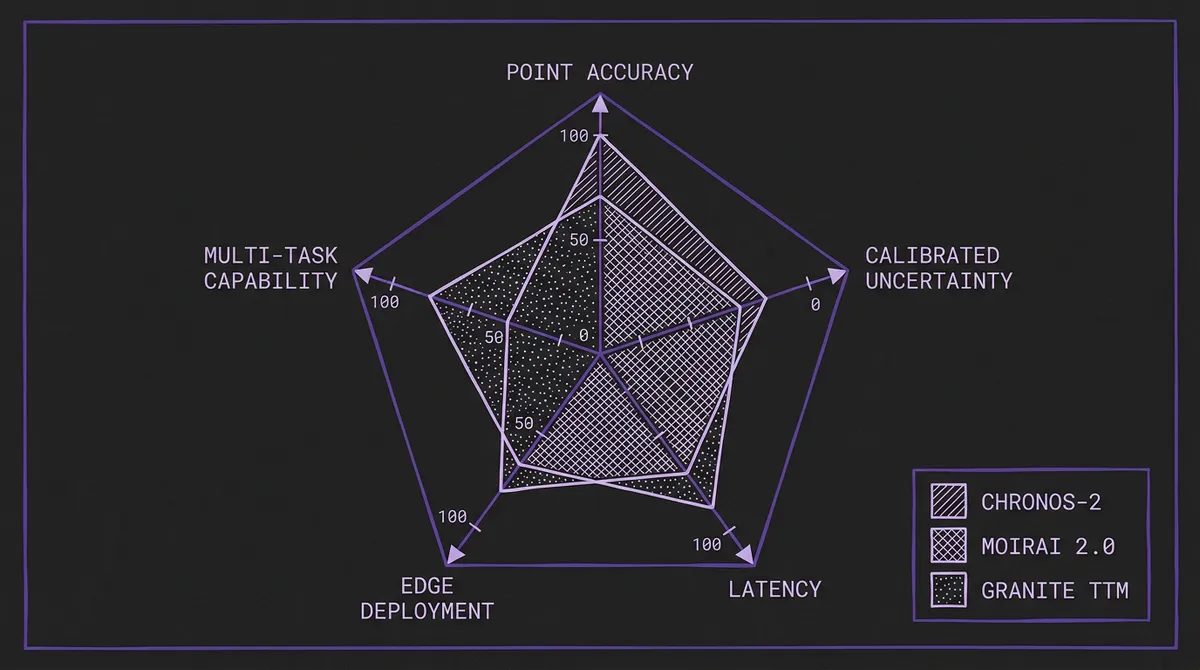

Amazon's Chronos family remains the most battle-tested choice for teams that want one model to handle diverse workloads. Chronos-2 (120M parameters) delivers state-of-the-art zero-shot accuracy on both FEV Bench and GIFT-Eval, excelling on retail, energy, and financial data at frequencies from minutely to yearly.

For latency-sensitive applications, Chronos-Bolt keeps a T5-style encoder-decoder backbone but replaces autoregressive token rollout with direct multi-step quantile forecasting over patched context. The result: comparable accuracy at much lower latency. If your pipeline forecasts millions of series nightly, Bolt is the pragmatic choice.
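Most of the latency difference comes from the number of sequential forward passes. The sketch below counts model calls for both strategies, using hypothetical "last value" stand-in models rather than Chronos or Chronos-Bolt. Chronos-Bolt actually emits quantiles for the whole horizon, but point values keep the call-count comparison readable.

```python
from typing import Callable, List, Tuple


def autoregressive_rollout(context: List[float], horizon: int,
                           step_model: Callable[[List[float]], float]) -> Tuple[List[float], int]:
    """Predict one step at a time, feeding each prediction back in as input."""
    history = list(context)
    preds: List[float] = []
    calls = 0
    for _ in range(horizon):
        nxt = step_model(history)  # one forward pass per future step
        calls += 1
        preds.append(nxt)
        history.append(nxt)
    return preds, calls


def direct_multistep(context: List[float], horizon: int,
                     multi_model: Callable[[List[float], int], List[float]]) -> Tuple[List[float], int]:
    """Predict the whole horizon in a single forward pass."""
    return multi_model(context, horizon), 1


# Hypothetical stand-in models: naive "repeat the last value" forecasters.
def last_value_step(history: List[float]) -> float:
    return history[-1]


def last_value_multi(history: List[float], horizon: int) -> List[float]:
    return [history[-1]] * horizon


ctx = [1.0, 2.0, 3.0]
ar_preds, ar_calls = autoregressive_rollout(ctx, 64, last_value_step)
dm_preds, dm_calls = direct_multistep(ctx, 64, last_value_multi)
print(ar_calls, dm_calls)  # 64 1
```

With a 64-step horizon, rollout needs 64 passes that cannot run in parallel, while the direct approach needs one.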

Best for: Mixed-domain production traffic, teams that want a single strong default.

Tradeoff: Chronos-2 costs more per series than Bolt because it targets broader universal forecasting, including multivariate and covariate-informed inputs, rather than the lowest possible latency. Bolt trades a small amount of flexibility for speed.

# Best for Uncertainty Quantification: Moirai 2.0

Salesforce's Moirai family was designed from the ground up for probabilistic forecasting. The Any-Variate attention mechanism handles univariate and multivariate series through a single architecture, and the model produces calibrated quantile forecasts at standard levels (0.1 through 0.9) out of the box.

Moirai 2.0 is the newer decoder-only Moirai line, and the public checkpoint we host is the Small model at roughly 11M parameters. It switches the family to quantile loss, multi-token prediction, and missing-value-aware patch embeddings. For workloads where calibrated prediction intervals matter more than point forecast accuracy, it remains one of the strongest compact open options.
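Calibration claims are easy to check on your own data. The sketch below assumes you already have forecasts at the 0.1 and 0.9 quantiles; the arrays are made-up toy values, not Moirai output. It computes the pinball (quantile) loss and the empirical coverage of the 0.1 to 0.9 interval. Over many windows, coverage should come out near 80% if the intervals are calibrated.

```python
import numpy as np


def pinball_loss(y: np.ndarray, q_pred: np.ndarray, q: float) -> float:
    """Average quantile (pinball) loss at quantile level q."""
    diff = y - q_pred
    return float(np.mean(np.maximum(q * diff, (q - 1) * diff)))


def interval_coverage(y: np.ndarray, lower: np.ndarray, upper: np.ndarray) -> float:
    """Fraction of observations that fall inside [lower, upper]."""
    return float(np.mean((y >= lower) & (y <= upper)))


# Toy actuals and quantile forecasts (illustrative values only).
y = np.array([10.0, 12.0, 9.0, 15.0, 11.0])
q10 = np.array([8.0, 10.0, 8.5, 11.0, 9.0])
q90 = np.array([12.0, 14.0, 11.0, 14.0, 13.0])

coverage = interval_coverage(y, q10, q90)
loss_q90 = pinball_loss(y, q90, 0.9)
print(coverage)  # 0.8
print(loss_q90)
```

A single five-point window says little on its own. Aggregate coverage and pinball loss across many series and forecast origins before comparing models.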

Best for: Risk-sensitive applications, financial forecasting, supply chain planning where understanding downside scenarios is critical.

Tradeoff: Point forecast accuracy can trail Chronos-2 on certain benchmark slices. The multivariate attention mechanism adds latency for univariate-only workloads.

# Best Speed-to-Accuracy Ratio: Google TimesFM 2.5

Google's TimesFM pioneered the patch-based decoder architecture for time series. TimesFM 2.5 (200M parameters) is the current release, offering strong zero-shot performance with a decoder-only design that processes input patches in parallel.

TimesFM's key advantage is its pretraining scale: trained on Google's internal time series corpus spanning Search trends, YouTube metrics, and Cloud monitoring data. This breadth shows on web traffic and digital metrics workloads where TimesFM often edges out competitors.

For teams with looser latency budgets, the older TimesFM 2.0 500M checkpoint remains available as a larger and sometimes more accurate alternative.

Best for: Web traffic, digital analytics, SaaS metrics, and workloads with moderate latency tolerance.

Tradeoff: Decoder-only architecture means sequential generation for long horizons. Less calibrated on probabilistic metrics than Moirai.
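This section covers two mechanics: patching the input, and generating long horizons step by step. Both can be sketched in a few lines of NumPy. The patch lengths below (32 in, 128 out) are illustrative assumptions, not values read from the TimesFM 2.5 checkpoint. The context becomes a short sequence of patch tokens, while the number of sequential decoder steps grows with the horizon.

```python
import math

import numpy as np


def patchify(context: np.ndarray, patch_len: int) -> np.ndarray:
    """Split a 1-D context into non-overlapping patches, shape (num_patches, patch_len).

    Left-pads with zeros so the length is a multiple of patch_len. This is a
    simplification; real models typically also pass a padding mask.
    """
    pad = (-len(context)) % patch_len
    padded = np.concatenate([np.zeros(pad), context])
    return padded.reshape(-1, patch_len)


def decode_steps(horizon: int, output_patch_len: int) -> int:
    """Sequential decoder steps needed if each step emits one output patch."""
    return math.ceil(horizon / output_patch_len)


context = np.arange(100, dtype=float)
patches = patchify(context, patch_len=32)        # assumed input patch length
print(patches.shape)                             # (4, 32)
print(decode_steps(512, output_patch_len=128))   # assumed output patch length -> 4
```

Any horizon up to one output patch costs a single decoder step. Beyond that, each additional patch adds another sequential pass.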

# Best for Resource-Constrained Environments: IBM Granite TTM

When you need forecasting at the edge, on CPU, or at massive scale with minimal compute, IBM's Granite TTM is the answer. At roughly 1M parameters, it is among the smallest hosted forecasting models in the catalog. The MLP-Mixer architecture avoids both attention and autoregressive generation, which keeps deployment lightweight and CPU-friendly.
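A generic MLP-Mixer block shows what "avoids attention" means in practice. One MLP mixes information across patches (time), and a second mixes across hidden features within each patch. Sizes and random weights are illustrative. This is not IBM's TTM implementation, which adds further components on top of the basic block.

```python
import numpy as np

rng = np.random.default_rng(0)


def gelu(x: np.ndarray) -> np.ndarray:
    # tanh approximation of GELU
    return 0.5 * x * (1 + np.tanh(np.sqrt(2 / np.pi) * (x + 0.044715 * x**3)))


def mlp(x: np.ndarray, w1: np.ndarray, w2: np.ndarray) -> np.ndarray:
    """Two-layer MLP applied along the last axis of x."""
    return gelu(x @ w1) @ w2


def mixer_block(x: np.ndarray, time_w, feat_w) -> np.ndarray:
    """x has shape (num_patches, d_model). Residual time mixing, then feature mixing."""
    # Time mixing: transpose so the MLP acts across the patch axis.
    x = x + mlp(x.T, *time_w).T
    # Feature mixing: the MLP acts across the hidden dimension of each patch.
    x = x + mlp(x, *feat_w)
    return x


num_patches, d_model, hidden = 8, 16, 32  # illustrative sizes
time_w = (rng.normal(size=(num_patches, hidden)) * 0.1,
          rng.normal(size=(hidden, num_patches)) * 0.1)
feat_w = (rng.normal(size=(d_model, hidden)) * 0.1,
          rng.normal(size=(hidden, d_model)) * 0.1)

x = rng.normal(size=(num_patches, d_model))
y = mixer_block(x, time_w, feat_w)
n_params = sum(w.size for w in time_w + feat_w)
print(y.shape, n_params)  # (8, 16) 1536
```

Every step is a dense matrix multiply with no attention matrix to build. That is a large part of why mixer-style models run comfortably on CPU.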

TTM punches well above its weight class. On many univariate benchmarks it matches or beats models 100x its size, and IBM's related FlowState model (9.1M parameters) extends this compact approach to somewhat more complex workloads.

Best for: IoT and manufacturing, edge deployment, batch forecasting millions of series with minimal GPU budget.

Tradeoff: TTM uses context- and horizon-specific checkpoints rather than one universal dense model. More complex or irregular workloads may fit better with larger pretrained families or with IBM's separate FlowState model.
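Because TTM ships separate checkpoints per context length and forecast horizon, deployment code usually needs a small selection step. The sketch below uses a hypothetical registry of (context_length, horizon) pairs. The values are illustrative, not an official checkpoint list, so check the release you actually deploy.

```python
from typing import List, Optional, Tuple

# Hypothetical (context_length, horizon) pairs, not an official checkpoint list.
CHECKPOINTS: List[Tuple[int, int]] = [
    (512, 96), (512, 336), (1024, 96), (1536, 96), (1536, 720),
]


def select_checkpoint(history_len: int, horizon: int) -> Optional[Tuple[int, int]]:
    """Pick the longest context the history can fill, then the shortest
    horizon that still covers the request."""
    usable = [(c, h) for c, h in CHECKPOINTS if c <= history_len and h >= horizon]
    if not usable:
        return None  # fall back to padding, another checkpoint, or another model
    max_ctx = max(c for c, _ in usable)
    return min((c, h) for c, h in usable if c == max_ctx)


print(select_checkpoint(history_len=1200, horizon=48))   # (1024, 96)
print(select_checkpoint(history_len=2000, horizon=200))  # (1536, 720)
print(select_checkpoint(history_len=300, horizon=24))    # None
```

When the chosen checkpoint's horizon exceeds the request, its forecast is truncated to the needed length.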

# Best for Observability and Infrastructure: Datadog Toto

Toto (151M parameters) is the only TSFM pretrained specifically on infrastructure monitoring data. Built by Datadog, it was trained on billions of metric time points from production infrastructure: CPU utilization, request latency, error rates, queue depths, and network throughput.

This domain specialization shows. On infrastructure and network traffic forecasting workloads, Toto's zero-shot accuracy matches or exceeds general-purpose models that have been fine-tuned on similar data. For SRE teams building anomaly detection and capacity planning pipelines, Toto should be your starting point.
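Many anomaly-detection pipelines flag points that fall outside a forecast's prediction interval. The sketch below assumes you already have lower and upper quantile forecasts from a probabilistic model, whether Toto or another. The metric values are made up.

```python
import numpy as np


def flag_anomalies(observed, lower, upper) -> np.ndarray:
    """Return the indices where the observed value falls outside [lower, upper]."""
    observed, lower, upper = map(np.asarray, (observed, lower, upper))
    outside = (observed < lower) | (observed > upper)
    return np.flatnonzero(outside)


# Made-up CPU utilization (%) and a forecast band for the same timestamps.
observed = [41, 43, 40, 88, 42, 12]
lower = [35, 36, 35, 36, 35, 35]
upper = [50, 51, 50, 52, 50, 50]
anomalies = flag_anomalies(observed, lower, upper)
print(anomalies)  # [3 5]
```

The band width controls the trade-off between missed incidents and false alerts. For example, you might use 0.05 to 0.95 instead of 0.1 to 0.9.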

Best for: DevOps, SRE, infrastructure monitoring, capacity planning.

Tradeoff: Domain-specific pretraining means weaker performance on retail, finance, and other non-infrastructure domains compared to general-purpose alternatives.

# Best for Research and Multi-Task: CMU MOMENT

MOMENT (385M parameters) is unique among TSFMs in supporting forecasting, classification, anomaly detection, and imputation within a single model. Developed at Carnegie Mellon, it treats time series tasks as masked reconstruction problems, making it the closest thing to a "BERT for time series."
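Masked reconstruction means hiding some input patches and asking the model to fill them back in, with the loss computed only on the hidden patches. The NumPy sketch below shows the masking and the loss. The "model" is a placeholder that fills each masked patch with the mean of the visible values, standing in for MOMENT's transformer. The 30% mask ratio is an assumption.

```python
import numpy as np

rng = np.random.default_rng(42)


def mask_patches(patches: np.ndarray, mask_ratio: float, rng) -> np.ndarray:
    """Boolean mask of shape (num_patches,), True where a patch is hidden."""
    n = patches.shape[0]
    n_masked = max(1, int(round(mask_ratio * n)))
    mask = np.zeros(n, dtype=bool)
    mask[rng.choice(n, size=n_masked, replace=False)] = True
    return mask


def placeholder_model(patches: np.ndarray, mask: np.ndarray) -> np.ndarray:
    """Stand-in for a real encoder: fill masked patches with the mean of visible values."""
    recon = patches.copy()
    recon[mask] = patches[~mask].mean()
    return recon


def masked_mse(patches: np.ndarray, recon: np.ndarray, mask: np.ndarray) -> float:
    """Reconstruction loss computed only on the masked patches."""
    return float(np.mean((patches[mask] - recon[mask]) ** 2))


series = np.sin(np.linspace(0, 8 * np.pi, 512))
patches = series.reshape(-1, 8)  # 64 patches of length 8
mask = mask_patches(patches, mask_ratio=0.3, rng=rng)
recon = placeholder_model(patches, mask)
loss = masked_mse(patches, recon, mask)
print(mask.sum(), loss)
```

A trained encoder would replace the placeholder. Adding a different head on top of the same backbone, such as a classifier or a forecaster, is how one pretrained model can serve several tasks.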

For research teams exploring multimodal approaches or building custom task heads, MOMENT's architecture is the most extensible. Its embedding space has also shown strong transfer learning properties for healthcare and scientific time series.

Best for: Research, multi-task pipelines, teams that need more than just forecasting from a single model.

Tradeoff: Jack of all trades, master of none on pure forecasting benchmarks. Larger than necessary if you only need point forecasts.

# The Rising Challengers

Several models deserve attention even if they have not yet achieved the deployment track record of the leaders above.

Sundial (128M) from THUML / Tsinghua University is the first diffusion-based TSFM, generating forecasts through iterative denoising rather than autoregressive generation. Its probabilistic outputs are naturally well-calibrated, and early benchmark results are competitive with Moirai on uncertainty quantification tasks.
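Generating forecasts by iterative refinement means starting from random noise and moving it toward the data distribution over many small steps. The sketch below is a tiny, generic illustration of that idea, not Sundial's model. It uses a flow-matching-style sampler, a close cousin of diffusion. For a single Gaussian target with assumed mean `mu` and standard deviation `sigma`, the velocity field is known in closed form. The sketch integrates it with Euler steps and checks that the samples end with roughly the right mean and spread. A real model replaces the closed-form velocity with a neural network conditioned on the context.

```python
import numpy as np


def velocity(x: np.ndarray, t: float, mu: float, sigma: float) -> np.ndarray:
    """Closed-form velocity E[x1 - x0 | x_t = x] for the straight-line path
    x_t = (1 - t) * x0 + t * x1, with x0 ~ N(0, 1) and x1 ~ N(mu, sigma^2)."""
    var_t = (1 - t) ** 2 + (t * sigma) ** 2
    k = (t * sigma**2 - (1 - t)) / var_t
    return mu + k * (x - t * mu)


def sample(n: int, mu: float, sigma: float, steps: int = 200, seed: int = 0) -> np.ndarray:
    rng = np.random.default_rng(seed)
    x = rng.standard_normal(n)  # start from pure noise
    dt = 1.0 / steps
    for i in range(steps):      # refine in many small Euler steps
        x = x + dt * velocity(x, i * dt, mu, sigma)
    return x


samples = sample(20_000, mu=10.0, sigma=2.0)
print(samples.mean(), samples.std())  # roughly 10.0 and 2.0
```

Because every output is a separate draw, the spread of many samples directly gives the prediction interval. This is the intuition behind the claim that such models produce naturally calibrated probabilistic outputs.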

Time-MoE from Xiaohongshu applies the mixture-of-experts architecture that revolutionized LLMs to time series. The public TimeMoE-200M checkpoint hosted here reports roughly 0.5B stored parameters while activating about 200M per forward pass, which is the key efficiency claim of the family.
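The gap between stored and activated parameters comes from sparse routing. A gating network scores every expert for each token, but only the top-k experts actually run. The NumPy sketch below uses made-up sizes (8 experts, top-2), not Time-MoE's actual configuration.

```python
import numpy as np

rng = np.random.default_rng(0)
d_model, d_ff, n_experts, top_k = 16, 64, 8, 2  # illustrative sizes

# Each expert is a two-layer MLP; the router is a single linear layer.
experts = [(rng.normal(size=(d_model, d_ff)) * 0.1, rng.normal(size=(d_ff, d_model)) * 0.1)
           for _ in range(n_experts)]
router = rng.normal(size=(d_model, n_experts)) * 0.1


def moe_layer(token: np.ndarray) -> np.ndarray:
    logits = token @ router
    chosen = np.argsort(logits)[-top_k:]              # indices of the top-k experts
    weights = np.exp(logits[chosen] - logits[chosen].max())
    weights /= weights.sum()                          # softmax over the chosen experts only
    out = np.zeros_like(token)
    for w, idx in zip(weights, chosen):
        w1, w2 = experts[idx]
        out += w * (np.maximum(token @ w1, 0) @ w2)   # only k experts do any compute
    return out


expert_params = d_model * d_ff * 2
total = n_experts * expert_params + router.size    # parameters stored
active = top_k * expert_params + router.size       # parameters used per token
out = moe_layer(rng.normal(size=d_model))
print(out.shape, total, active)  # (16,) 16512 4224
```

Production implementations batch tokens per expert and usually add a load-balancing loss during training. Time-MoE's own routing details may differ from this sketch.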

TiRex from NXAI is a compact xLSTM-based zero-shot forecaster that stands out mainly as a non-transformer alternative. It is worth watching if you want strong short- and long-horizon forecasting from a relatively small checkpoint, but the current public release should be treated as a univariate forecasting model rather than a covariate-native system.

PatchTST, originally proposed by Yuqi Nie et al. and hosted here via IBM Granite's ETTh1 checkpoint, pioneered the patch-based approach that most subsequent TSFMs adopted. While not the top performer on current benchmarks, its architecture has been remarkably influential, and it remains a solid baseline.

# Quick Reference Matrix

| Model | Params | Architecture | Best Use Case | Latency | Probabilistic |
|---|---|---|---|---|---|
| Chronos-2 | 120M | Encoder | General purpose | Medium | Yes |
| Chronos-Bolt | 205M | T5 encoder-decoder (direct) | High-throughput | Low | Yes |
| Moirai 2.0 | ~11M | Decoder (quantile) | Uncertainty quantification | Medium | Yes (calibrated) |
| TimesFM 2.5 | 200M | Decoder (patched) | Web/digital metrics | Medium | Partial |
| Granite TTM | ~1M | MLP-Mixer | Edge/IoT/batch | Very low | Limited |
| Toto | 151M | Decoder (observability-pretrained) | Infrastructure/observability | Low | Yes |
| MOMENT | 385M | Encoder (masked) | Research/multi-task | Medium | Yes |
| Sundial | 128M | Diffusion | Calibrated probabilistic | Higher | Yes (diffusion) |
| Time-MoE | 200M active / ~0.5B total | MoE Transformer | Cost-efficient accuracy | Medium | Yes |

# How to Choose

If you are starting from scratch and want one model: Chronos-2 or Chronos-Bolt depending on your latency budget.

If uncertainty matters more than point accuracy: Moirai-2.0-R-Small.

If you are forecasting infrastructure metrics: Toto.

If you need to run on CPU or at the edge: Granite TTM.

If you are building a research pipeline or need multi-task capabilities: MOMENT.
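These rules can be written down as a small routing function, which makes a useful documented default in pipeline code. The field names, the 100 ms cutoff, and the one-million-series threshold mirror the three questions from the decision framework. The precedence order of the checks is our own assumption.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    latency_budget_ms: float   # per-request latency budget
    needs_intervals: bool      # are calibrated prediction intervals required?
    num_series: int            # series forecast per run
    domain: str = "general"    # hypothetical labels: "infrastructure", "research", ...
    cpu_only: bool = False


def pick_model(w: Workload) -> str:
    """Map a workload to a starting model, following the rules above."""
    if w.cpu_only:
        return "Granite TTM"
    if w.domain == "infrastructure":
        return "Toto"
    if w.domain == "research":
        return "MOMENT"
    if w.needs_intervals:
        return "Moirai 2.0"
    if w.latency_budget_ms < 100 or w.num_series >= 1_000_000:
        return "Chronos-Bolt"
    return "Chronos-2"


print(pick_model(Workload(latency_budget_ms=50, needs_intervals=False, num_series=5_000_000)))  # Chronos-Bolt
print(pick_model(Workload(latency_budget_ms=2000, needs_intervals=True, num_series=200)))       # Moirai 2.0
```

Treat the output as a starting point for a head-to-head test, not a final answer.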

And if you want to test multiple models against your data before committing: that is exactly what TSFM.ai's model routing is designed for. Send the same request to multiple models through our unified API, compare outputs, and let the data tell you which model wins on your specific distribution.
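Whatever routing layer you use, the comparison itself is a holdout backtest. Hide the last `h` points, ask each candidate to forecast that window, and score the results. The sketch below uses two naive stand-in forecasters in place of real model calls; it does not show the TSFM.ai API, so swap in your own client. Forecasts are scored with mean absolute error (MAE).

```python
from typing import Callable, Dict

import numpy as np

Forecaster = Callable[[np.ndarray, int], np.ndarray]


def naive_last(context: np.ndarray, h: int) -> np.ndarray:
    """Stand-in model: repeat the last observed value."""
    return np.repeat(context[-1], h)


def seasonal_naive(context: np.ndarray, h: int, season: int = 7) -> np.ndarray:
    """Stand-in model: repeat the last full season."""
    reps = int(np.ceil(h / season))
    return np.tile(context[-season:], reps)[:h]


def backtest(series: np.ndarray, h: int, models: Dict[str, Forecaster]) -> Dict[str, float]:
    """Hold out the last h points and return the MAE of each model."""
    context, actual = series[:-h], series[-h:]
    return {name: float(np.mean(np.abs(f(context, h) - actual))) for name, f in models.items()}


t = np.arange(70)
series = 10 + 3 * np.sin(2 * np.pi * t / 7)  # synthetic weekly pattern, no noise
scores = backtest(series, h=14, models={"naive_last": naive_last, "seasonal_naive": seasonal_naive})
best = min(scores, key=scores.get)
print(best)  # seasonal_naive
```

On real data, repeat this over several forecast origins and many series before trusting a winner. A single holdout window is easy to overfit to.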

Explore all available models in our catalog, run comparisons in the playground, and check benchmark results for the latest accuracy data.